The Penguin Men

About

Follow our team as we compete in MoonBots 2012! We are Asa, Kyle, and Matt (Captain). Email: matt@mattjensen.com

Monday, December 17, 2012

Demo at New Horizon School

Matt gave a demo of our Inspiration rover last week, at New Horizon School in Renton, WA. He also talked about the engineering mindset, and careers in technology. The middle school students had great questions, and helped Matt diagnose a problem with the rover!

Monday, December 10, 2012

Highlight Video

Here's a five-minute version of the highlights of our rover, "Inspiration". For details about our mission, our live webcast, our software (which we've made Open Source), and photos, see this blog post.

Friday, November 16, 2012

FINAL JUDGING -- Documenting Our Work

Just a few follow-up items, as MoonBots 2012 judging finishes...

Archival Video

Our webcast on 2012-11-11 did not record. However, we simultaneously recorded with a handheld camera. We prefaced the live demo footage with some practice session footage from earlier, to help show parts that didn't work right in the live demo. See our final judging video.

Photos

Detailed pictures of our landscape and robot are here.

Source Code

We have uploaded all of our source code, and are making it Open Source. We hope future teams will be able to advance our Ultrasonic Steering, and make a cool Mission Monitor or remote control application like we enjoyed making.

* All source code (Both NXT-G for Inspiration, and the monitoring and remote control apps.)

* Just the USsteering.rbt My Block

About Ultrasonic Steering

Some people have asked about our Ultrasonic Steering (US), so here is how we did it...

As we experimented with the idea, we found out that US is only going to work well in certain situations. First, because we found the sensors only have a "cone of view" of about 30 degrees, the target item has to be roughly in front of the robot. US is thus for closing in on an object, but the robot has to be pointed in the object's general direction first.

Second, the two ultrasonic sensors have to be spread far apart. Inspiration's sensors are 30cm apart, which means that in order to fit into base at the mission start, we needed to have them spring-loaded with rubber bands.

Third, the two sensors will interfere with each other if you are not careful. A signal from the left sensor that bounces off an object and hits the right sensor will give wacky values to both sensors. This problem is more obvious in this diagram:

On the left, the robot's sensors are confused because the signal bounces off the target at an angle. On the right, the signal tends to bounce directly back when it hits a round object, so each sensor receives its own signal back!
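The first constraint above (the narrow "cone of view") can be sketched as a small geometry check. This is only an illustration, not part of our NXT-G code: the function name `in_cone`, its parameters, and the reading of "about 30 degrees" as a full cone angle (15 degrees to each side of the sensor's axis) are all assumptions.

```python
import math

# Assumption: "about 30 degrees" is the full cone angle, so a target is
# visible when it is within 15 degrees of the sensor's forward axis.
CONE_ANGLE_DEG = 30.0


def in_cone(lateral_cm, forward_cm, cone_angle_deg=CONE_ANGLE_DEG):
    """Return True if a target lies inside one sensor's cone of view.

    lateral_cm: sideways offset of the target from the sensor's axis.
    forward_cm: distance of the target straight ahead of the sensor.
    (Both names are hypothetical, chosen for this sketch.)
    """
    # Angle between the sensor's forward axis and the line to the target.
    angle_deg = math.degrees(math.atan2(abs(lateral_cm), forward_cm))
    return angle_deg <= cone_angle_deg / 2.0
```

A target straight ahead passes the check, while one far off to the side (or behind the sensor) does not, which is why the robot has to be pointed roughly at the object before ultrasonic steering can take over.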

This is the My Block that does the actual steering:

Every time this My Block is called, it compares values from the left and right ultrasonic sensors. It then adjusts the motors to steer slightly toward whichever sensor is closer to an object. Below, the My Block is placed within a loop, which repeats until the touch sensor is triggered.
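Since NXT-G is a graphical language, here is a rough text sketch of the same idea in Python. It is not a translation of USsteering.rbt: the function names, the power values, and the assumption that the rover steers by slowing the wheel on one side are all hypothetical, chosen only to show the compare-and-nudge logic and the loop around it.

```python
def steering_powers(left_cm, right_cm, base_power=50, trim=10):
    """One steering step: return (left_motor, right_motor) power.

    Assumption for this sketch: the robot veers toward a side when the
    wheel on that side is slowed by `trim`.
    """
    if left_cm < right_cm:
        # Object is nearer the left sensor: slow the left wheel to veer left.
        return base_power - trim, base_power
    if right_cm < left_cm:
        # Object is nearer the right sensor: slow the right wheel to veer right.
        return base_power, base_power - trim
    # Equal readings: keep driving straight.
    return base_power, base_power


def approach(readings, touched):
    """Repeat steering steps until the touch sensor is triggered.

    readings: sequence of (left_cm, right_cm) sensor pairs, one per step.
    touched:  sequence of booleans, True once the touch sensor fires.
    Returns the list of motor commands issued before contact.
    """
    commands = []
    for (left_cm, right_cm), hit in zip(readings, touched):
        if hit:
            break
        commands.append(steering_powers(left_cm, right_cm))
    return commands
```

For example, `approach([(40, 55), (30, 30), (10, 12)], [False, False, True])` issues one slight left correction, one straight step, and then stops when the touch sensor fires. Each correction is small on purpose, so that a single bad reading cannot swing the robot far off course.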

Monday, November 12, 2012

Successful Webcast!

We had a great webcast at the Museum of Flight yesterday. We'll post a version recorded from other cameras later this week, as well as all our robot and mission monitoring programs.

From the West Seattle Herald

How We Did:

We knew a month or two ago that we would never win the final round on actual game points :-) Our approach, using a realistic landscape (sandy, rocky, irregular), turned out to be even harder than we thought it would be, and we had to focus on doing just a few things (traverse ridge, pick up one item, try to get it home). But we were just happy to "push the envelope" and try something that's never been tried before in MoonBots. We've learned so much (about traction, torque, balance, effects of dust, etc.) that we now consider ourselves the world's leading authorities on Mindstorms rovers for harsh, extraterrestrial environments :-)

How Inspiration Did:

Well, Inspiration had some stage fright, showing many more glitches than in the previous few days. The sensor arms failed to pop out several times, and we ended up hitting the wall more than usual. But it didn't do anything terrible, and we were able to demonstrate our ultrasonic steering, though not our complete, end-to-end mission as planned.

What the Crowd Thought:

We had a large, enthusiastic crowd at the Museum. We talked about MoonBots and GLXP to a lot of kids and adults, and we had at least thirty people drive Inspiration by joystick at the end, before we had to pack up. Even though the realistic landscape made things much harder for us, the crowd really, really liked the landscape. We think it really made the demonstration a simulation for them, something above a typical robot display. We've had two teachers ask us to demo at their schools, too!

Upcoming Video:

We're going to put together a video explaining all the technical challenges we ran into, what we tried, and how we ended up with our final design. Believe us, every beam and peg of Inspiration is there for a reason. Each part is a winner in a competition of mini-designs for each feature or function.

Hey, the West Seattle Herald had a nice story about our webcast, with a video interview. Thanks!

Sunday, November 11, 2012

The Big Day

Though we were first to sign up for today, we're now the last of *five* team webcasts the judges are watching today! Good luck to the teams, and good luck to the judges, too :-)

Our rover, "Inspiration", is ready at the Museum of Flight. 2:30pm Pacific.

Thursday, November 8, 2012

"Inspiration" Rover Looking Good!

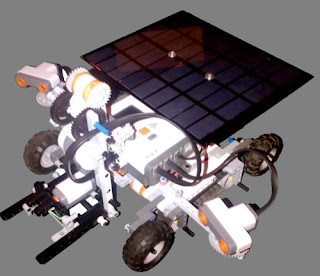

Three days until our webcast! Our rover, "Inspiration", has had many, many changes over the weeks, as we "refactor" the design to make it more reliable and simpler. Here it is in current form:

Last week we did an early preview for a 4th Grade class. Asa and Kyle practiced Q&A, and did a demonstration. We then hooked up our joystick and let some kids drive! Great questions from the kids, and great suggestions for improvements :-) Since that preview, we rebuilt the arm to deliver more torque, added "stabilizer wheels" to improve stability on slopes, and designed a new pop-out mechanism for the ultrasonic sensors. One more push this weekend before Sunday's webcast!

Friday, October 26, 2012

Clever Sensor Tricks for Sandy Lunar Surface

If you haven't read our team's proposal in detail, here is the most important thing, the thing that distinguishes our mission from every other team's, as far as we know: we are using a sandy, rocky landscape!

Other teams are using flat table-tops, or LEGO(R) base plates for their lunar surfaces, as is the custom in FLL, and MoonBots (up to now, heh heh). We decided to propose a realistic landscape, covered with sand, occasional rocks, and a bumpy High Ridge. We feel this will really help the public "connect" with the mission during our live demonstration.

However, once you move to a sandy, rocky surface, you find that you cannot trust your motors the way you can on a flat table-top. You tell a wheel to make four rotations, and you might find the robot only went a distance equal to three and a half rotations, or worse.
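To put numbers on that, here is a small sketch of the arithmetic. The wheel diameter of 5.6 cm is an assumption for illustration only (we are not stating Inspiration's actual wheel size here), and the function names are made up for this example.

```python
import math

# Assumption for illustration: a 5.6 cm diameter wheel.
WHEEL_DIAMETER_CM = 5.6


def distance_cm(rotations, wheel_diameter_cm=WHEEL_DIAMETER_CM):
    """Distance covered by a wheel that rolls without slipping."""
    return rotations * math.pi * wheel_diameter_cm


def slip_fraction(commanded_rotations, effective_rotations):
    """Fraction of the commanded travel lost to wheel slip."""
    return 1.0 - effective_rotations / commanded_rotations


# Four rotations commanded, but only three and a half rotations' worth of travel.
commanded = distance_cm(4)
actual = distance_cm(3.5)
shortfall = commanded - actual          # half a wheel circumference
lost = slip_fraction(4, 3.5)            # 0.125, i.e. 12.5% of the move
```

Losing an eighth of every move adds up quickly over a whole mission, and on sand the loss is not even consistent from one run to the next, so it cannot simply be compensated for with a fixed correction factor.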

Our strategy to deal with this is to use ultrasonic steering. We won't trust where our task items are on the landscape; we will find them in real time. Besides making the mission achievable, it also has a bonus feature: it makes the robot tolerant of errors. If the task item is a few inches away from where we humans thought it would be when programming the robot, the sensor-based steering will correct for that automatically!

To implement ultrasonic steering, we concluded we have to use two ultrasonic sensors, just as you can close your eyes and use your two ears to locate where a sound is coming from. We think this is the first time binaural steering (like binocular, but for sound instead of light) has been successfully used in a LEGO robot competition.

Remember: our live webcast from the Museum of Flight is on Sunday, November 11th, 2012, at 2:30pm (Pacific).
